I’ve been exploring the concept of treating software builds as micro-services. The reasons are below.

- Portability: Just do a docker run and you’ve got a clean environment to build your software!

- Scale: The classic master-and-agent type of CI infrastructure has some limitations, because the master must track the state of every agent and pick the right type of agent to build on. It’s really just another layer to manage.

- Versioning: Build components are defined in Dockerfiles versioned in git. If I need to build on version X of Java, I just pull it into my build with docker run, as opposed to installing it on a system and sorting out which system has the appropriate version at build time. Try doing that simply with a classic CI server.
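
To make the versioning point concrete, here is a minimal sketch of how a build step might pin its toolchain through the image tag. The helper name `build_command`, the `/builds/myapp` path and both tag strings are placeholders I made up for illustration; substitute whatever image and tags you actually publish.

```python
import shlex


def build_command(image: str, tag: str, workdir: str, goal: str = "package") -> list[str]:
    """Return a `docker run` command that builds `workdir` inside a pinned image."""
    return [
        "docker", "run", "--rm",      # remove the container when the process exits
        "-v", f"{workdir}:/src",      # mount the checked-out code into the container
        "-w", "/src",                 # run the build from the mounted directory
        f"{image}:{tag}",             # the tag is what selects the Java version
        "mvn", goal,
    ]


# Hypothetical tags: the same build, pinned to two different toolchains.
cmd_old = build_command("maven", "java-x-placeholder", "/builds/myapp")
cmd_new = build_command("maven", "java-y-placeholder", "/builds/myapp")

print(shlex.join(cmd_old))
print(shlex.join(cmd_new))
```

The only difference between the two commands is the image tag, so switching Java versions is a one-line change that lives in version control rather than on a build machine.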

I’m going to show that you can deliver software with CI methodologies through a pipeline, without a traditional CI server. I have authored and contributed to plugins for Jenkins, so you may find it odd that I’m challenging the position of a classic CI server. However, there are simply opportunities to do things differently and perhaps better.

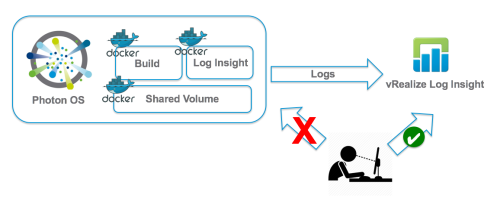

In this case I’ll show you how to build in a micro-service fashion using VMware’s Code Stream, Photon OS and Log Insight!

No Humans allowed!

The key to a consistent build is keeping people out of the gears. Traditionally, log analysis has meant people logging into servers, which introduces a risk of drift. At best, the logs were dumped onto something like Jenkins, and developers would download them to their desktops and sift through them with Notepad.

First things first, we must address the build logs!

Log Insight is a great tool for centralizing logs and viewing their contents. We’ll need to turn the Log Insight agent into a container. Take a peek at my Log Insight agent on Docker Hub. It’s based on the Photon OS image on Docker Hub.

Don’t Blink! Well…Go ahead and blink it’s OK.

The next challenge is to treat the build steps as processes. Containers are perfect for this. A container returns the exit code of the process it’s running, and it only exists for the duration of that process! For a build I need at least two processes: a git clone to download the code and a Maven process to build it. Once again, I have both git and Maven images on Docker Hub to serve as examples.
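
The "build step as a process" idea can be sketched in a few lines. This is only an illustration: `run_step` is a name I made up, and the two commands are tiny Python stand-ins so the example runs without Docker. In a real build each command would be a `docker run ...` invocation, which exits with the exit code of the process inside the container.

```python
import subprocess
import sys


def run_step(command: list[str]) -> int:
    """Run one build step as a process and return its exit code."""
    completed = subprocess.run(command)
    return completed.returncode


# Stand-ins for containerized steps (assumption: real steps would be `docker run` commands).
passing_step = [sys.executable, "-c", "raise SystemExit(0)"]
failing_step = [sys.executable, "-c", "raise SystemExit(3)"]

print("passing step exit code:", run_step(passing_step))
print("failing step exit code:", run_step(failing_step))
```

A zero exit code means the step succeeded; anything else is a failure the caller can act on, with no agent or long-lived build server involved.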

Volumes!

Now that I have three images (a git image, a Maven image and a Log Insight image), I need a way to share data between them. A shared volume works perfectly for this.
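
Here is a rough sketch of what sharing one volume across the three containers could look like, expressed as the Docker commands a script would issue. The image names (`example/git`, `example/maven`, `example/loginsight-agent`), the `/workspace` mount point and the repository URL are all placeholders of mine, not the names of the images mentioned above.

```python
def shared_volume_commands(volume: str, repo_url: str) -> list[list[str]]:
    """Return Docker commands that share one named volume between all build containers."""
    mount = f"{volume}:/workspace"
    return [
        # Create the named volume that every container will mount.
        ["docker", "volume", "create", volume],
        # Long-running log agent (detached) that can read build output from the volume.
        ["docker", "run", "-d", "--name", f"{volume}-logs", "-v", mount,
         "example/loginsight-agent"],
        # Short-lived step: clone the code into the volume.
        ["docker", "run", "--rm", "-v", mount,
         "example/git", "git", "clone", repo_url, "/workspace/src"],
        # Short-lived step: build the code that the previous step left in the volume.
        ["docker", "run", "--rm", "-v", mount, "-w", "/workspace/src",
         "example/maven", "mvn", "package"],
    ]


for command in shared_volume_commands("build-42", "https://git.example.com/demo/app.git"):
    print(" ".join(command))
```

Because every container mounts the same named volume, the clone step's output is simply there when the Maven step starts, and the log agent can see whatever the build writes.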

Got the picture? Now here is the frame.

Pipeline!

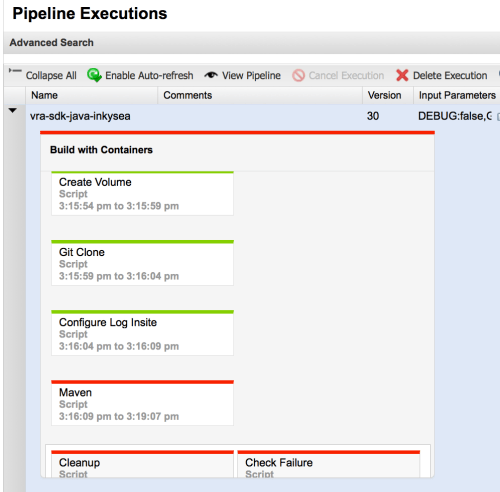

I use Code Stream to create the containers on Photon OS and manage the stages based on the exit status of each process run. Note that the volume and Log Insight container persist until I have them cleaned up once I’m done with the build.
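
Code Stream handles the stage logic for me, but the underlying idea is small enough to sketch. This is not how Code Stream is implemented; it is just a hedged illustration, with made-up names (`run_pipeline`, the stage labels) and Python one-liners standing in for the container commands, of "stop at the first failing stage, then always clean up".

```python
import subprocess
import sys
from typing import Optional


def run_pipeline(stages: list[tuple[str, list[str]]],
                 cleanup: list[list[str]]) -> Optional[str]:
    """Run stages in order; return the name of the first failed stage, or None.

    Cleanup commands always run, whether the pipeline passed or failed.
    """
    try:
        for name, command in stages:
            if subprocess.run(command).returncode != 0:
                return name  # a non-zero exit status stops the pipeline here
        return None
    finally:
        for command in cleanup:
            subprocess.run(command)  # e.g. remove the log container and the volume


py = sys.executable
stages = [
    ("clone", [py, "-c", "raise SystemExit(0)"]),    # stand-in for the git container
    ("build", [py, "-c", "raise SystemExit(1)"]),    # stand-in for a failing Maven container
    ("publish", [py, "-c", "raise SystemExit(0)"]),  # never reached in this example
]
cleanup = [[py, "-c", "raise SystemExit(0)"]]        # stand-in for the cleanup commands

failed_stage = run_pipeline(stages, cleanup)
print(failed_stage)  # prints "build"
```

Putting the cleanup in `finally` mirrors the behaviour described above: the shared resources outlive the individual steps and are only removed once the whole build is done.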

How do I trigger the pipeline? With a webhook on the git server. Every time a code commit occurs, the webhook tells the pipeline to build. This is perfect for an agile environment that treats every commit as a potential release candidate.
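
If you have never wired up a webhook, the receiving side is just an HTTP endpoint that accepts a POST and kicks off work. The sketch below uses only the Python standard library and is an assumption-heavy illustration: in my setup the pipeline tool is the receiver, and the function name `make_webhook_server`, the JSON payload and the 202 response are choices I made for the example, not anything a particular git server or Code Stream prescribes.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Callable


def make_webhook_server(port: int, trigger: Callable[[dict], None]) -> HTTPServer:
    """Build an HTTP server that calls `trigger(payload)` for every POSTed JSON body."""

    class WebhookHandler(BaseHTTPRequestHandler):
        def do_POST(self) -> None:
            length = int(self.headers.get("Content-Length", 0))
            try:
                payload = json.loads(self.rfile.read(length) or b"{}")
            except json.JSONDecodeError:
                self.send_response(400)  # reject bodies that are not valid JSON
                self.end_headers()
                return
            trigger(payload)             # hand the commit notification to the pipeline
            self.send_response(202)      # accepted: the build continues asynchronously
            self.end_headers()

        def log_message(self, format: str, *args) -> None:
            pass  # keep the example quiet; a real service would log requests

    return HTTPServer(("127.0.0.1", port), WebhookHandler)


def start_build(payload: dict) -> None:
    """Hypothetical trigger: a real one would start the pipeline for this commit."""
    print("build requested for", payload.get("repository", "unknown repository"))
```

Calling `make_webhook_server(8080, start_build).serve_forever()` would leave it listening; the git server's webhook is then pointed at that address so each commit results in one POST and one build.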

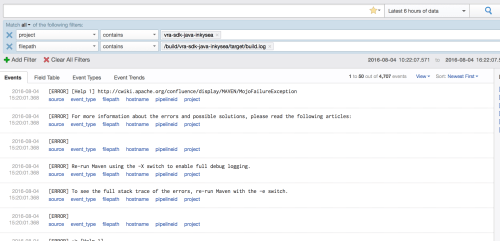

As you can see below, my Maven build failed. That’s cool because I can now look at Log Insight and see my Maven build log and Surefire JUnit test results to figure out where the problem is.

Visibility!

Inside Log Insight I can now search for my project using tags created when I instantiated my Log Insight agent during the build. In this case, my pipeline configures the Log Insight agent to create a “project” tag in Log Insight, named after the code project. I can also add a “buildNumber” tag to search for a particular build.
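
For the curious, here is a sketch of how a pipeline step might generate the tagged agent configuration at build time. It assumes the agent reads a `[filelog|...]` section with a JSON `tags` entry from its `liagent.ini` file, which is my understanding of the agent's config format; check the agent documentation for your version. The function name, the section name, the log directory and the sample values are all illustrative.

```python
import json


def filelog_section(name: str, directory: str, project: str, build_number: int) -> str:
    """Render one file-log section that tags every collected line with project and build."""
    tags = {"project": project, "buildNumber": str(build_number)}
    return "\n".join([
        f"[filelog|{name}]",
        f"directory={directory}",      # where the build containers write their logs
        "include=*.log",
        f"tags={json.dumps(tags)}",    # tags become searchable fields on each event
    ])


section = filelog_section("maven", "/workspace/logs", project="myapp", build_number=42)
print(section)
```

Generating the section per build is what makes the tags useful: the project name and build number are known to the pipeline at run time, so they can be stamped onto every log line from that build.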

I can then view the entries in their context, which gives me all the data I need to know why the build failed.

Once the logs are in Log Insight I can get fancy with dashboards collecting metrics and also alerting if certain events are logged. Pretty slick!

Want to learn more?

I’ll be speaking at VMworld 2016 on this topic. I’m partnering with Ryan Kelly on “vRA, API, CI, Oh My!” If you are attending VMworld, stop by and find me. If you aren’t attending, I’ll post more information after VMworld.